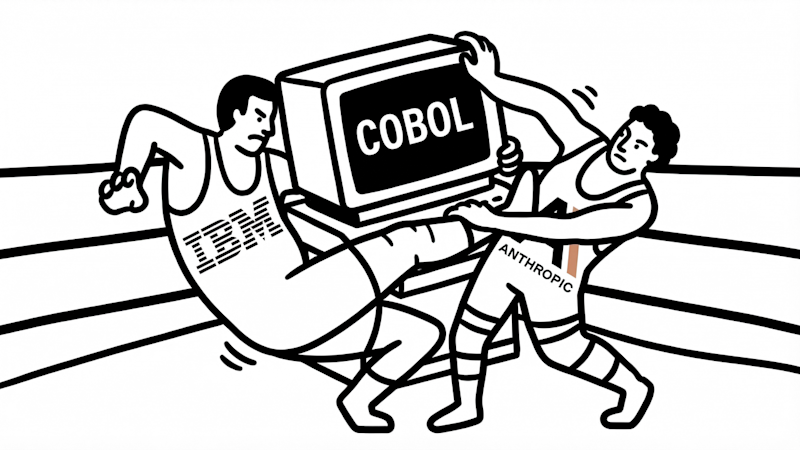

Anthropic ditches its core safety promise in the middle of an AI red line fight with the Pentagon

Key Points:

- Anthropic, an AI safety-focused company founded by former OpenAI employees, is loosening its strict safety principles. It is replacing its binding Responsible Scaling Policy with a more flexible, nonbinding safety framework to better compete in the fast-growing AI market.

- The company dropped its earlier rule requiring it to pause training more powerful AI models if their capabilities outpaced its ability to control them, arguing that unilateral pauses could lead to a less safe world if competitors continue unchecked.

- This policy shift coincides with pressure from the Pentagon, which threatened to blacklist Anthropic and cancel a $200 million contract unless the company rolled back its AI safeguards. Anthropic nevertheless remains firm on its concerns about the use of AI in weapons and mass surveillance.

- Anthropic’s new