How much does distillation really matter for Chinese LLMs?

Key Points:

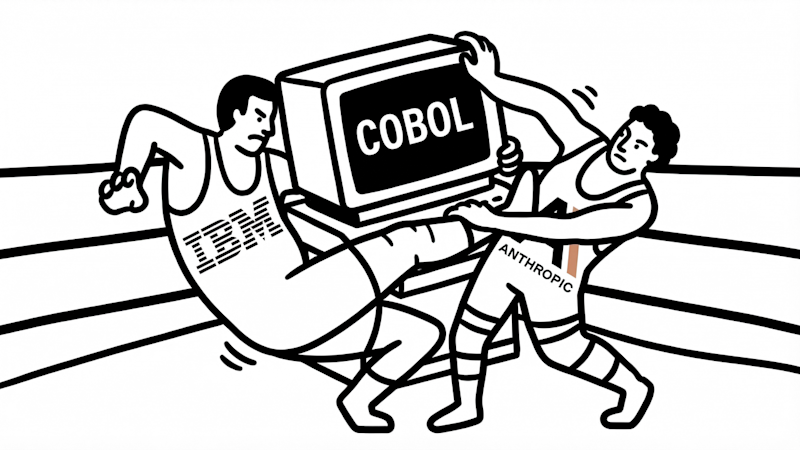

- Anthropic has publicly accused three Chinese AI labs (DeepSeek, Moonshot, and MiniMax) of running large-scale distillation campaigns against its Claude models, generating over 16 million exchanges through fraudulent accounts to illicitly extract capabilities.

- Distillation, a common training method in which outputs from a stronger model are used to train a weaker one, is a significant shortcut for improving models, especially for labs with limited compute; however, its impact varies widely with implementation and data quality.

- The scale of usage differed among the accused labs: DeepSeek’s usage was relatively small (~150,000 exchanges) with limited impact, while Moonshot and MiniMax ran much larger operations (3.4 million and 13 million exchanges, respectively), potentially improving