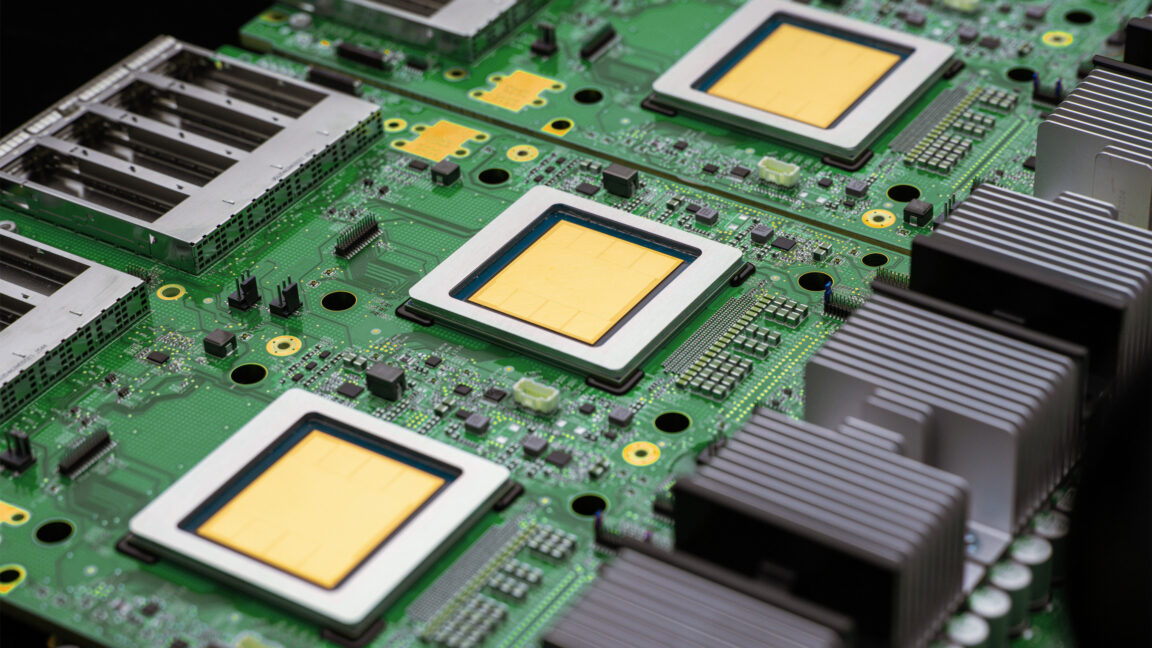

Google unveils two new TPUs designed for the "agentic era"

Key Points:

- Google has introduced its eighth-generation custom Tensor Processing Units (TPUs): the TPU 8t for AI model training and the TPU 8i for inference. Both are designed to deliver higher speed and efficiency than previous generations.

- Google says the TPU 8t can cut AI training times from months to weeks. It scales to 9,600 chips per server cluster ("pod"), delivering 121 exaflops of FP4 compute, nearly three times the training capacity of the previous-generation Ironwood TPU.

- The TPU 8i is optimized for inference. It uses larger inference pods of 1,152 chips and more on-chip SRAM to handle longer context windows, making AI models more efficient to run once deployed.

- Google emphasizes energy efficiency, claiming twice the performance per watt of the previous generation. It also pairs the chips with upgraded data center liquid cooling that actively controls water flow.

- The new TPUs will power Google’s future Gemini AI agents and support popular AI development frameworks. This positions Google as a strong competitor to Nvidia in the AI accelerator market. The announcement comes amid recent fluctuations in Nvidia’s stock price.